This strategy is by no means optimal or exhaustive. It is for students who are struggling with basic integration and antidifferentiation and need something to help them start calculating straightforward integrals and finding antiderivatives.

TL;DR: The strategy I present for antidifferentiating a function is as follows:

- Direct

- Manipulation

- Substitution

- Parts
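To make the four steps concrete, here is one small worked integral per step. The integrands are illustrative choices, not taken from the post, and the reading of each step name is an assumption: "Direct" as recognising a standard antiderivative, "Manipulation" as rewriting the integrand algebraically first, "Substitution" as a change of variable $u$, and "Parts" as integration by parts.

```latex
\begin{align*}
\text{Direct:} \quad & \int x^3 \, dx = \frac{x^4}{4} + C \\
\text{Manipulation:} \quad & \int (x+1)^2 \, dx = \int \left( x^2 + 2x + 1 \right) dx = \frac{x^3}{3} + x^2 + x + C \\
\text{Substitution:} \quad & \int 2x \cos(x^2) \, dx = \int \cos u \, du = \sin(x^2) + C, \quad u = x^2,\ du = 2x \, dx \\
\text{Parts:} \quad & \int x e^x \, dx = x e^x - \int e^x \, dx = x e^x - e^x + C
\end{align*}
```

Each answer can be checked by differentiating the right-hand side and recovering the integrand.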

In this short note we will explain why we multiply matrices in this “rows-by-columns” fashion. This note will only look at $2 \times 2$ matrices but it should be clear, particularly by looking at this note, how this generalises to matrices of arbitrary size.

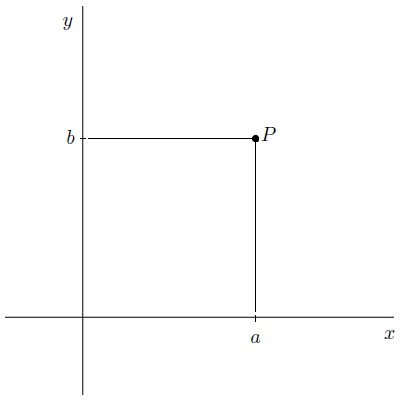

First of all we need some objects. Consider the plane $\Pi$. By fixing an origin, orientation ($x$- and $y$-directions), and scale, each point $P \in \Pi$ can be associated with an ordered pair $(a,b)$, where $a$ is the distance along the $x$ axis and $b$ is the distance along the $y$ axis. For the purposes of linear algebra we denote this point $P = (a,b)$ by

$$\begin{pmatrix} a \\ b \end{pmatrix}.$$

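We have two basic operations with points in the plane. We can add them together and we can scalar multiply them: if $\lambda \in \mathbb{R}$ and $\begin{pmatrix} a \\ b \end{pmatrix}$, $\begin{pmatrix} c \\ d \end{pmatrix}$ are points in the plane, then

$$\begin{pmatrix} a \\ b \end{pmatrix} + \begin{pmatrix} c \\ d \end{pmatrix} = \begin{pmatrix} a + c \\ b + d \end{pmatrix},$$

and

$$\lambda \begin{pmatrix} a \\ b \end{pmatrix} = \begin{pmatrix} \lambda a \\ \lambda b \end{pmatrix}.$$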
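As a quick numerical check of these two operations, here is a small worked instance. The particular points and the scalar $3$ are arbitrary choices made for illustration and do not come from the note.

```latex
\begin{align*}
\begin{pmatrix} 1 \\ 2 \end{pmatrix} + \begin{pmatrix} 3 \\ -1 \end{pmatrix}
  &= \begin{pmatrix} 1 + 3 \\ 2 + (-1) \end{pmatrix}
   = \begin{pmatrix} 4 \\ 1 \end{pmatrix}, \\
3 \begin{pmatrix} 1 \\ 2 \end{pmatrix}
  &= \begin{pmatrix} 3 \cdot 1 \\ 3 \cdot 2 \end{pmatrix}
   = \begin{pmatrix} 3 \\ 6 \end{pmatrix}.
\end{align*}
```

Both operations act on each coordinate separately, which is why addition of points is commutative and scalar multiplication distributes over it.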

Objects in mathematics that can be added together and scalar-multiplied are said to be vectors. Sets of vectors are known as vector spaces and a feature of vector spaces is that all vectors can be written in a unique way as a sum of basic vectors.
