In this short note we will explain why we multiply matrices in this “rows-by-columns” fashion. This note will only look at $2\times 2$ matrices, but it should be clear how this generalises to matrices of arbitrary size.

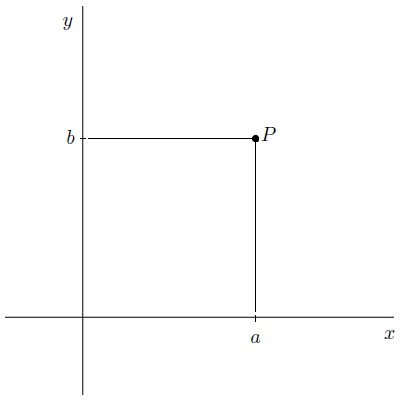

First of all we need some objects. Consider the plane $\Pi$. By fixing an origin, orientation ($x$- and $y$-directions), and scale, each point $P\in\Pi$ can be associated with an ordered pair $(a,b)$, where $a$ is the distance along the $x$-axis and $b$ is the distance along the $y$-axis. For the purposes of linear algebra we denote this point $P=(a,b)$ by the column $\begin{pmatrix}a\\ b\end{pmatrix}$.

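We have two basic operations with points in the plane: we can add them together and we can multiply them by a scalar. If $\lambda\in\mathbb{R}$ and $\begin{pmatrix}a\\ b\end{pmatrix}$, $\begin{pmatrix}c\\ d\end{pmatrix}$ are two points in the plane, then

$$\begin{pmatrix}a\\ b\end{pmatrix}+\begin{pmatrix}c\\ d\end{pmatrix}=\begin{pmatrix}a+c\\ b+d\end{pmatrix},\quad\text{and}\quad \lambda\begin{pmatrix}a\\ b\end{pmatrix}=\begin{pmatrix}\lambda a\\ \lambda b\end{pmatrix}.$$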
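As a small illustration of these two operations, here is a sketch in Python that models a point of the plane as a pair `(a, b)`. The helper names `add` and `scale` are chosen for this example only.

```python
# A point of the plane is modelled as a tuple (a, b).
# The names `add` and `scale` are illustrative, not from the note.

def add(u, v):
    """Componentwise addition of two points of the plane."""
    return (u[0] + v[0], u[1] + v[1])

def scale(lam, v):
    """Multiply each component of v by the scalar lam."""
    return (lam * v[0], lam * v[1])

print(add((1, 2), (3, 4)))  # (4, 6)
print(scale(3, (1, 2)))     # (3, 6)
```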

Objects in mathematics that can be added together and scalar-multiplied are said to be vectors. Sets of vectors are known as vector spaces and a feature of vector spaces is that all vectors can be written in a unique way as a sum of scalar multiples of basic vectors.

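In the case of the plane $\Pi$, the vectors $e_1=\begin{pmatrix}1\\ 0\end{pmatrix}$ (one unit along the $x$-axis) and $e_2=\begin{pmatrix}0\\ 1\end{pmatrix}$ (one unit along the $y$-axis) are basic vectors and the set $B=\{e_1,e_2\}$ is said to be a basis for $\Pi$. The dimension of a vector space is the size of the basis (bases are not unique but their size is). Every vector $\begin{pmatrix}a\\ b\end{pmatrix}\in\Pi$ may, in a unique way, be written as a sum of scalar multiples of elements of $B$:

$$\begin{pmatrix}a\\ b\end{pmatrix}=a\,e_1+b\,e_2.$$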

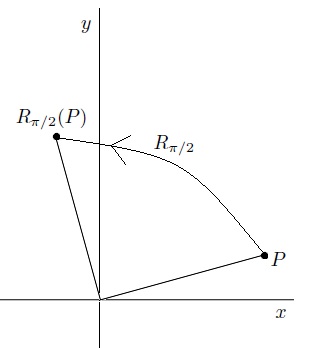

One of the first things to do when an algebraic structure is defined, in this case the plane $\Pi$, is to consider functions on it. A function $f:\Pi\to\Pi$ is a map that sends each vector $v\in\Pi$ to another vector $f(v)\in\Pi$. For example, the map $R_\theta:\Pi\to\Pi$ that rotates a point $\theta$ radians around the origin, in the anti-clockwise direction, is such a function.
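To make the rotation example concrete, here is a minimal Python sketch. It assumes the standard coordinate formula for an anti-clockwise rotation about the origin, which the note itself does not write out, and the function name `rotate` is illustrative.

```python
import math

def rotate(v, theta):
    """Rotate the point v = (x, y) anti-clockwise by theta radians about the origin.

    Uses the standard formula (x cos t - y sin t, x sin t + y cos t),
    which is an assumption of this sketch rather than something stated in the note.
    """
    x, y = v
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y, s * x + c * y)

# A quarter turn sends the point (1, 0) to (approximately) (0, 1).
p = rotate((1.0, 0.0), math.pi / 2)
print(p)
```

Because of floating-point rounding, the first coordinate of `p` is a tiny number rather than exactly zero.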

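Of particular interest are linear maps. A linear map is a function between two vector spaces that preserves the operations of vector addition and scalar multiplication. In the case of functions $\Pi\to\Pi$, a linear map is any function $T:\Pi\to\Pi$ where

$$T(u+v)=T(u)+T(v)\quad\text{and}\quad T(\lambda u)=\lambda\,T(u)$$

for any vectors $u,v\in\Pi$ and scalar $\lambda\in\mathbb{R}$. The quick calculation:

$$T\begin{pmatrix}x\\ y\end{pmatrix}=T(x\,e_1+y\,e_2)=x\,T(e_1)+y\,T(e_2),$$

shows that a linear map is determined by what it does to the basis vectors $e_1$ and $e_2$. Suppose that a linear map is defined, for scalars $a_{11},a_{12},a_{21},a_{22}\in\mathbb{R}$, by:

$$T(e_1)=a_{11}e_1+a_{21}e_2,\quad\text{and}\quad T(e_2)=a_{12}e_1+a_{22}e_2,$$

then we see that

$$T\begin{pmatrix}x\\ y\end{pmatrix}=x(a_{11}e_1+a_{21}e_2)+y(a_{12}e_1+a_{22}e_2)=\begin{pmatrix}a_{11}x+a_{12}y\\ a_{21}x+a_{22}y\end{pmatrix}.$$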
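The fact that a linear map of the plane is pinned down by the images of the two basis vectors can be sketched in Python as follows. The helper `make_linear_map` and the sample values are hypothetical, chosen only for illustration.

```python
def make_linear_map(T_e1, T_e2):
    """Build the linear map T of the plane with T(e1) = T_e1 and T(e2) = T_e2.

    For v = (x, y) = x*e1 + y*e2, linearity forces T(v) = x*T(e1) + y*T(e2).
    """
    def T(v):
        x, y = v
        return (x * T_e1[0] + y * T_e2[0], x * T_e1[1] + y * T_e2[1])
    return T

# Illustrative choice: T(e1) = (1, 3) and T(e2) = (2, 4).
T = make_linear_map((1, 3), (2, 4))
print(T((1, 0)))  # (1, 3)
print(T((0, 1)))  # (2, 4)
print(T((5, 6)))  # (17, 39), that is 5*(1, 3) + 6*(2, 4)
```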

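Now it turns out that all this information can be encoded by a matrix as follows. Let $v=\begin{pmatrix}x\\ y\end{pmatrix}$. Then $T(v)=Av$, where $A$ is the matrix whose columns hold the coefficients of $T(e_1)$ and $T(e_2)$:

$$A=\begin{pmatrix}a_{11} & a_{12}\\ a_{21} & a_{22}\end{pmatrix}.$$

If we take matrix multiplication to be as we define it, then multiplying this out we see that the two of these are the same thing:

$$Av=\begin{pmatrix}a_{11} & a_{12}\\ a_{21} & a_{22}\end{pmatrix}\begin{pmatrix}x\\ y\end{pmatrix}=\begin{pmatrix}a_{11}x+a_{12}y\\ a_{21}x+a_{22}y\end{pmatrix}=T(v).$$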
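A quick numerical check of this agreement, as a Python sketch: the matrix is stored as a list of rows, and the function name `matvec_2x2` and the sample entries are assumptions of the example.

```python
def matvec_2x2(A, v):
    """Rows-by-columns product of a 2x2 matrix A (a list of two rows) with v = (x, y)."""
    (a11, a12), (a21, a22) = A
    x, y = v
    return (a11 * x + a12 * y, a21 * x + a22 * y)

# Illustrative matrix: its columns (1, 3) and (2, 4) are the images of e1 and e2.
A = [[1, 2],
     [3, 4]]

print(matvec_2x2(A, (1, 0)))  # (1, 3), the first column of A
print(matvec_2x2(A, (0, 1)))  # (2, 4), the second column of A
print(matvec_2x2(A, (5, 6)))  # (17, 39)
```

Multiplying by a basis vector picks out a column, which is why the columns of the matrix record what the map does to the basis.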

Therefore two-by-two matrices are actually functions in the sense that every linear map $T:\Pi\to\Pi$ is of the form:

$$T(v)=Av,$$

for some $2\times 2$ matrix $A$.

Another notation for $\Pi$ is $\mathbb{R}^2$, basically two copies of the real numbers. All finite-dimensional vector spaces of dimension $n$, where the scalars are real numbers, are essentially of the form $\mathbb{R}^n$, basically a list of $n$ numbers. It turns out that a matrix of size $m\times n$ ($m$ rows, $n$ columns) encodes a linear map $\mathbb{R}^n\to\mathbb{R}^m$ (note the switch from $m\times n$ to $n\to m$).
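The switch in the order of the dimensions is easy to see in a small sketch. Assuming a matrix is stored as a list of rows, the Python below applies a matrix with 2 rows and 3 columns to a list of 3 numbers and gets back a list of 2 numbers. The name `matvec` and the sample matrix are illustrative.

```python
def matvec(M, v):
    """Apply a matrix M (a list of rows) to a vector v.

    Each row must have as many entries as v; the result has one entry per row.
    """
    assert all(len(row) == len(v) for row in M), "each row needs len(v) entries"
    return tuple(sum(a * x for a, x in zip(row, v)) for row in M)

# 2 rows, 3 columns: this matrix takes 3 numbers in and gives 2 numbers out.
M = [[1, 0, 2],
     [0, 1, 3]]

w = matvec(M, (1, 1, 1))
print(w)       # (3, 4)
print(len(w))  # 2
```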

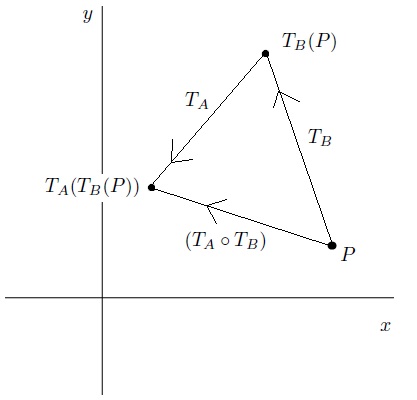

We can compose two functions to produce another. For example, consider two linear maps encoded by two $2\times 2$ matrices $A$ and $B$. Suppose we act on a point $v$ first by $B$ and then by $A$:

$$v\mapsto Bv\mapsto A(Bv).$$

Now this composition is a function in itself, sending $v$ to $A(Bv)$.

Now there are two questions. Is the map sending $v$ to $A(Bv)$ linear (yes, a straightforward exercise)? And can we associate to this composition a single matrix, say $C$, such that $Cv=A(Bv)$ for every $v$ and $C=AB$? The answer is also yes.

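Let us write $v=\begin{pmatrix}x\\ y\end{pmatrix}$ and define $A$ and $B$ by matrices

$$A=\begin{pmatrix}a_{11} & a_{12}\\ a_{21} & a_{22}\end{pmatrix}\quad\text{and}\quad B=\begin{pmatrix}b_{11} & b_{12}\\ b_{21} & b_{22}\end{pmatrix}.$$

Then

$$Bv=\begin{pmatrix}b_{11}x+b_{12}y\\ b_{21}x+b_{22}y\end{pmatrix},$$

and so

$$A(Bv)=\begin{pmatrix}a_{11}(b_{11}x+b_{12}y)+a_{12}(b_{21}x+b_{22}y)\\ a_{21}(b_{11}x+b_{12}y)+a_{22}(b_{21}x+b_{22}y)\end{pmatrix}=\begin{pmatrix}a_{11}b_{11}+a_{12}b_{21} & a_{11}b_{12}+a_{12}b_{22}\\ a_{21}b_{11}+a_{22}b_{21} & a_{21}b_{12}+a_{22}b_{22}\end{pmatrix}\begin{pmatrix}x\\ y\end{pmatrix}.$$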

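Some careful inspection shows that the matrix $C$ representing the composition is nothing but, where $r_i$ is the $i$-th row of $A$, and $c_j$ is the $j$-th column of $B$:

$$C=\begin{pmatrix}r_1\cdot c_1 & r_1\cdot c_2\\ r_2\cdot c_1 & r_2\cdot c_2\end{pmatrix},$$

where this $\cdot$, called the dot product, takes a pair of vectors and sends them to a scalar. In the case of vectors in the plane:

$$\begin{pmatrix}a\\ b\end{pmatrix}\cdot\begin{pmatrix}c\\ d\end{pmatrix}=ac+bd.$$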
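The claim that the rows-by-columns product is exactly the matrix of the composition can be checked numerically. The following Python sketch uses illustrative names (`dot`, `matvec`, `matmul`) and sample matrices that do not come from the note: it builds the product entry by entry from dot products of rows with columns, then compares acting with the product against acting by one matrix and then the other.

```python
def dot(u, v):
    """Dot product of two vectors of equal length."""
    return sum(a * b for a, b in zip(u, v))

def matvec(A, v):
    """Apply the matrix A (a list of rows) to the vector v."""
    return tuple(dot(row, v) for row in A)

def matmul(A, B):
    """Rows-by-columns product: entry (i, j) is (row i of A) . (column j of B)."""
    columns_of_B = list(zip(*B))
    return [[dot(row, col) for col in columns_of_B] for row in A]

A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [5, 6]]
v = (7, 8)

C = matmul(A, B)
print(C)                        # [[10, 13], [20, 27]]
print(matvec(A, matvec(B, v)))  # (174, 356): first B, then A
print(matvec(C, v))             # (174, 356): the single matrix C
```

Swapping the order, `matmul(B, A)`, gives a different matrix in general, matching the fact that composing the maps in the other order is a different function.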

So the reason that we multiply matrices the way we do is that the matrix product $AB$ represents the function composition $A\circ B$: first apply $B$, then $A$.
